Most AI Visibility Audits Ask the Wrong Questions: A 15-Prompt Framework for Niche Intelligence Publishers

Ujasusi Tradecraft Desk | 09 May 2026 | 0215 BST

A commercial AI Engine Optimisation (AEO) audit recently tested an African intelligence analysis publication against prompts like “best intelligence analysis platforms for security professionals” and “top security analysis tools for government agencies.” The result was 0% visibility across ChatGPT and Google Gemini. The publication does not sell enterprise software. It publishes analytical briefs. The audit measured the wrong category entirely.

When the same publication was retested using 15 prompts matching its actual niche (African intelligence services, East African security affairs, Tanzanian political analysis), it scored 100% on Gemini and 93% on ChatGPT, with first-position placement in the majority of responses. The problem was never visibility. It was prompt design.

Mismatched prompt design is a systemic issue in the emerging AEO industry. This article presents a 15-prompt audit framework designed for niche analytical publishers, tested against ChatGPT and Gemini using Ujasusi as the case study.

Generic AEO Prompts Produce Misleading Results for Specialist Publishers

The commercial AEO market currently borrows its methodology from traditional SEO audits: broad, high-volume keyword phrases tested across multiple models. This works for companies competing in large, well-defined product categories. It fails for independent publishers operating in narrow analytical niches.

A prompt like “best intelligence analysis platforms for security professionals” surfaces Recorded Future, Palantir, and ShadowDragon. These are enterprise SaaS platforms with procurement budgets and sales teams. An independent analyst publishing OSINT-driven intelligence briefs on a Substack will never appear in that result, and should not be expected to. The 0% score is not a visibility failure. It is a category mismatch that the audit framework was not designed to detect.

The core problem: if the prompts do not match the publication’s actual competitive set, the audit measures nothing.

A 15-Prompt Framework Built for Niche Analytical Publishers

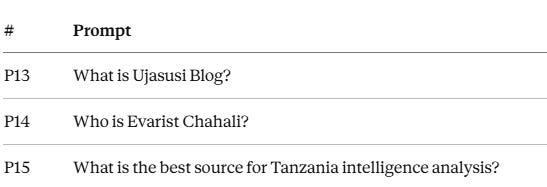

The framework uses five categories of prompts, each testing a different layer of AI discoverability. Every prompt is submitted in its own fresh session, with no conversation history, no user preferences, and no system instructions.
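The clean-context rule can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the tooling used in the audit: `run_clean_audit`, `is_cited`, and `fake_model` are hypothetical names, the prompt texts are placeholders, and the model call is a stand-in you would swap for a real client that opens a new session per prompt.

```python
from typing import Callable, Dict, Iterable


def run_clean_audit(prompts: Dict[str, str],
                    ask_fresh: Callable[[str], str]) -> Dict[str, str]:
    """Send each prompt through its own isolated call and collect the replies."""
    responses: Dict[str, str] = {}
    for prompt_id, text in prompts.items():
        # Only the prompt text is passed: no history, preferences, or system instructions.
        responses[prompt_id] = ask_fresh(text)
    return responses


def is_cited(response: str, brand_terms: Iterable[str]) -> bool:
    """True if any brand term appears in the reply (case-insensitive)."""
    lowered = response.lower()
    return any(term.lower() in lowered for term in brand_terms)


# Stand-in for a real model client, so the sketch runs without network access.
def fake_model(prompt: str) -> str:
    return "Sources include Ujasusi Blog." if "P1" in prompt else "No match."


# Placeholder prompt texts (assumption); the real wording is in the framework tables.
prompts = {"P1": "<P1 prompt text>", "P2": "<P2 prompt text>"}
responses = run_clean_audit(prompts, fake_model)
cited = {pid: is_cited(reply, ["Ujasusi"]) for pid, reply in responses.items()}
print(cited)
```

Passing the model call in as a function keeps the isolation requirement visible: the runner has no place to store or forward anything from one prompt to the next.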

Category 1: Core Niche Authority (P1–P3). These prompts test whether the model associates the publication with its primary analytical domain.

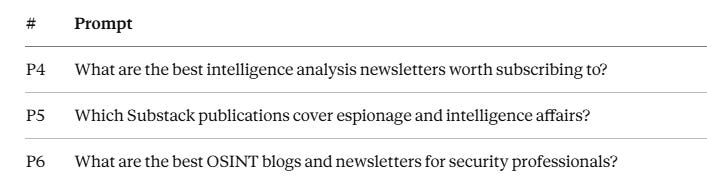

Category 2: Newsletter and Platform Discovery (P4–P6). These test whether the publication surfaces during general “what should I read” queries.

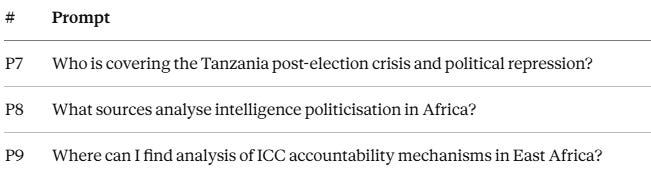

Category 3: Event-Specific Coverage (P7–P9). These test whether the model recognises the publication’s authority on specific ongoing events and analytical themes.

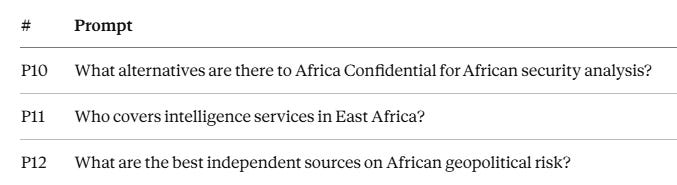

Category 4: Competitive Positioning (P10–P12). These reveal where the model places the publication relative to established competitors.

Category 5: Brand Recognition (P13–P15). These test whether the model knows the publication exists at all.

The framework is adaptable. Any niche publisher can replace the domain-specific terms (African intelligence, East African security, Tanzania) with their own subject area and competitive set while keeping the five-category structure intact.
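For publishers who want to keep the structure in a script or spreadsheet export, the five categories can be written down as data. The sketch below is one possible layout, assuming Python; `PromptCategory`, `category_of`, and `adapt` are hypothetical names, and the template sentence is invented for illustration rather than taken from the 15 prompts.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class PromptCategory:
    name: str
    prompt_ids: Tuple[str, ...]
    tests: str


FRAMEWORK = (
    PromptCategory("Core Niche Authority", ("P1", "P2", "P3"),
                   "association with the primary analytical domain"),
    PromptCategory("Newsletter and Platform Discovery", ("P4", "P5", "P6"),
                   "surfacing in general 'what should I read' queries"),
    PromptCategory("Event-Specific Coverage", ("P7", "P8", "P9"),
                   "authority on specific ongoing events and themes"),
    PromptCategory("Competitive Positioning", ("P10", "P11", "P12"),
                   "placement relative to established competitors"),
    PromptCategory("Brand Recognition", ("P13", "P14", "P15"),
                   "whether the model knows the publication exists"),
)


def category_of(prompt_id: str) -> PromptCategory:
    """Look up which category a prompt ID belongs to."""
    for category in FRAMEWORK:
        if prompt_id in category.prompt_ids:
            return category
    raise KeyError(prompt_id)


def adapt(template: str, **terms: str) -> str:
    """Fill a prompt template with a publisher's own domain terms."""
    return template.format(**terms)


# Hypothetical template wording, not one of the article's 15 prompts.
example = adapt("Who publishes specialist analysis on {domain}?",
                domain="East African security affairs")
print(category_of("P9").name, "|", example)
```

Swapping the `domain` value is the whole adaptation step; the category names and prompt ID ranges stay fixed.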

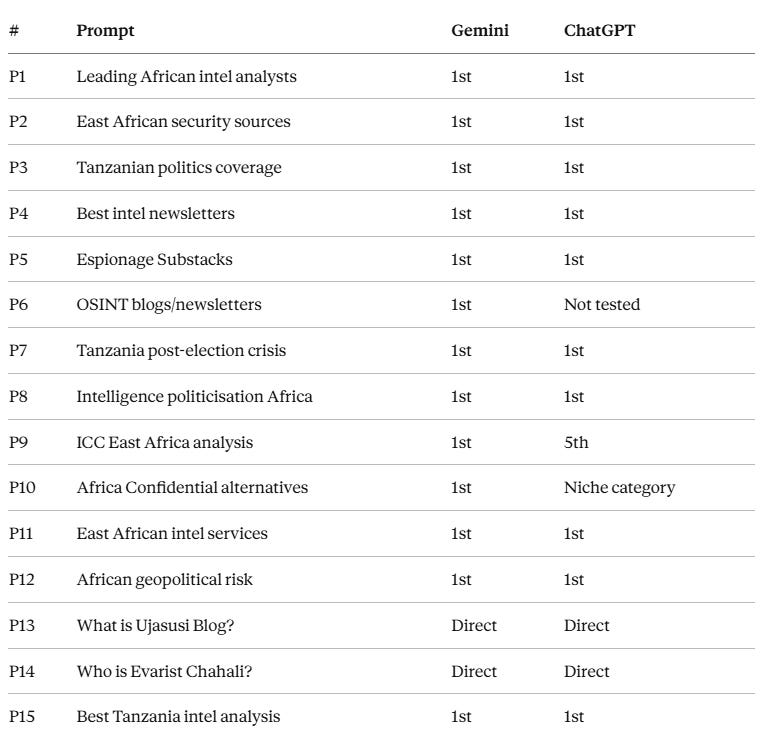

Case Study Results: Gemini 15/15, ChatGPT 14/15

When the framework was applied to Ujasusi Blog, an independent intelligence and security analysis publication covering African intelligence services, espionage, and Tanzanian political analysis, the results diverged sharply from the commercial audit’s 0%.

Gemini returned the publication in first position across all 15 prompts, with full entity recognition of the publishing ecosystem including the English-language blog, the Swahili-language newsletter Barua ya Chahali, and JasusiTV.

ChatGPT returned the publication in 14 of the 15 prompts (the remaining prompt was untested during the audit window), with first-position placement in 12. The only non-first placement on a niche prompt was P9 (ICC accountability mechanisms), where ChatGPT reasonably ranked institutional legal bodies (the ICC, REDRESS, FIDH, and Human Rights Watch) higher than an independent analyst.

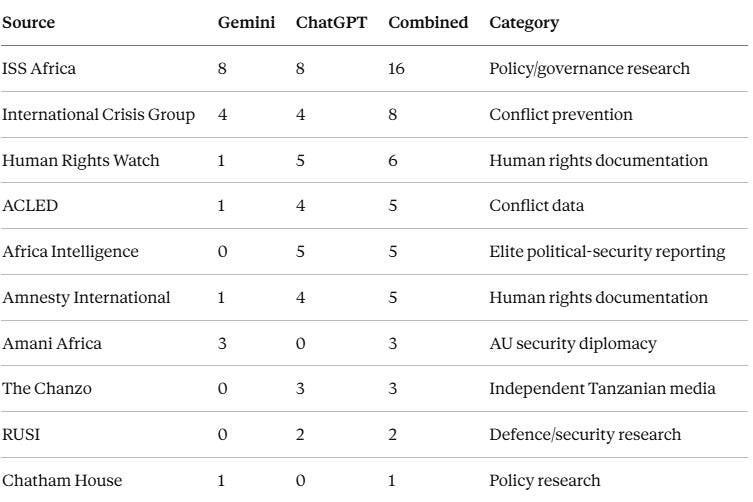
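The headline percentages follow directly from the counts reported above. The short Python sketch below reproduces that arithmetic; `ModelTally` and `pct` are hypothetical names. One assumption to note: the first-position share is computed over all 15 prompts, which gives a figure for ChatGPT that the audit itself does not report, and a different denominator (for example, only the prompts actually tested) would give a different number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTally:
    model: str
    prompts: int         # prompts in the framework
    appeared: int        # responses that cited the publication
    first_position: int  # responses that cited it first


def pct(hits: int, total: int) -> int:
    """Share of hits as a whole-number percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * hits / total)


# Counts taken from the case study results.
tallies = [
    ModelTally("Gemini", prompts=15, appeared=15, first_position=15),
    ModelTally("ChatGPT", prompts=15, appeared=14, first_position=12),
]

for t in tallies:
    # Assumption: both rates use the full 15-prompt framework as the denominator.
    print(f"{t.model}: visibility {pct(t.appeared, t.prompts)}%, "
          f"first position {pct(t.first_position, t.prompts)}%")
```

The same function applied to the commercial audit's result (zero appearances) returns 0%, which is why the prompt set, not the formula, decides what the score means.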

AI Models Create Implicit Source Taxonomies

The audit tracked every source cited alongside the case study publication across both models. The data reveals that AI models do not rank all sources in a single list. They create implicit categories and recommend the strongest source within each.

The taxonomy that emerges across both models follows a consistent pattern: institutional research organisations (ISS Africa, Crisis Group, Chatham House), human rights documentation bodies (HRW, Amnesty), conflict data platforms (ACLED), elite insider reporting (Africa Intelligence, Africa Confidential), and independent specialist analysis. A niche publisher’s AEO strategy should focus on owning its category rather than competing with institutionally larger organisations in theirs.
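One way to work with this taxonomy during an audit is to group the sources cited in each response by category, then see which of them sit in the publisher's own lane. The Python sketch below assumes the category labels from the paragraph above; placing Ujasusi under independent specialist analysis is an assumption based on the case study, and `group_by_category` and `same_category` are hypothetical helper names.

```python
from typing import Dict, Iterable, List

SOURCE_TAXONOMY: Dict[str, List[str]] = {
    "institutional research organisations": ["ISS Africa", "Crisis Group", "Chatham House"],
    "human rights documentation bodies": ["HRW", "Amnesty"],
    "conflict data platforms": ["ACLED"],
    "elite insider reporting": ["Africa Intelligence", "Africa Confidential"],
    # Assumption: the case study publication belongs in this category.
    "independent specialist analysis": ["Ujasusi"],
}


def group_by_category(cited: Iterable[str]) -> Dict[str, List[str]]:
    """Group the sources cited in one response by taxonomy category."""
    lookup = {source: category
              for category, sources in SOURCE_TAXONOMY.items()
              for source in sources}
    grouped: Dict[str, List[str]] = {}
    for source in cited:
        grouped.setdefault(lookup.get(source, "uncategorised"), []).append(source)
    return grouped


def same_category(publication: str, cited: Iterable[str]) -> List[str]:
    """Cited sources that share the publication's category (its direct rivals)."""
    for members in group_by_category(cited).values():
        if publication in members:
            return [s for s in members if s != publication]
    return []


cited = ["ACLED", "Ujasusi", "Chatham House"]
print(group_by_category(cited))
print(same_category("Ujasusi", cited))
```

An empty list from `same_category` is the outcome the strategy aims for: the publication is cited alongside larger organisations but has its category to itself.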

Three Findings That Apply Beyond This Case Study

Niche specialism has measurable AI value. Both ChatGPT and Gemini distinguish between broad institutional coverage and narrow specialist expertise. The traditional assumption that larger organisations with bigger budgets automatically dominate AI recommendations does not hold in specialist niches. An independent publisher occupying a category that institutional competitors do not occupy will be recommended when users ask niche-specific questions.

Multi-platform presence reinforces entity recognition. Both models recognised not just the primary publication but the entire ecosystem, including the English-language blog, the Swahili-language newsletter, and the YouTube channel, as a coherent brand. Cross-platform publishing creates stronger entity signals in AI training data than single-platform concentration.

Prompt design determines audit validity. The same publication scored 0% on generic enterprise prompts and near-100% on niche-specific prompts. Any publisher commissioning an AEO audit should insist on prompts that reflect how their actual audience searches, not how a different market segment searches for a different product category. The 15-prompt framework presented here is designed for quarterly re-testing to track position stability as models update their training data and retrieval pipelines.
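Quarterly re-testing is easiest to act on when each run is stored as a position per prompt and compared against the previous run. The Python sketch below shows one way to do that comparison; `position_changes` is a hypothetical helper, and the two sample runs are invented figures for illustration, not audit data.

```python
from typing import Dict, Optional, Tuple

# Prompt ID -> rank of the publication in the reply, or None if it was not cited.
Positions = Dict[str, Optional[int]]


def position_changes(previous: Positions,
                     current: Positions) -> Dict[str, Tuple[Optional[int], Optional[int]]]:
    """Return only the prompts whose position differs between two audit runs."""
    changes = {}
    for prompt_id in sorted(set(previous) | set(current)):
        before, after = previous.get(prompt_id), current.get(prompt_id)
        if before != after:
            changes[prompt_id] = (before, after)
    return changes


# Invented figures for illustration only; not audit data.
last_quarter = {"P1": 1, "P2": 1, "P3": 2}
this_quarter = {"P1": 1, "P2": 3, "P3": None}

print(position_changes(last_quarter, this_quarter))
```

Reporting only the changed prompts keeps the quarterly review short: a stable run produces an empty result, and any drop or disappearance shows up with its before and after positions.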